JUNOS & ME

Networking and Troubleshooting

Technical blog of @DOOR7302

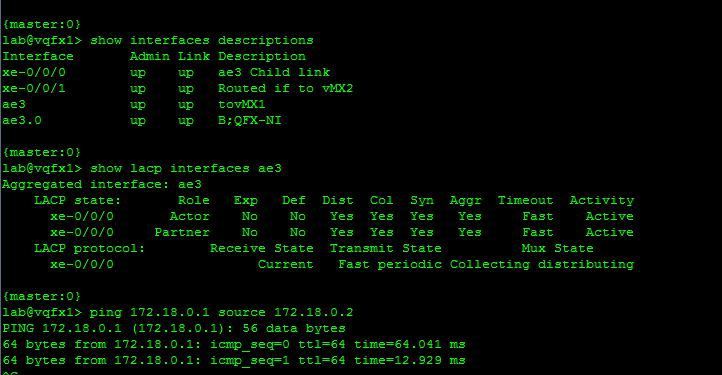

As I'm currently studying for the DC certification track, I decided to set up my first vQFX lab. My current multi-vendor virtual lab runs on ESXi, so I wanted to keep my VMware hypervisor for the vQFX instances as well. I'm a lucky man: vmdk images for vQFX are now available.

Here is a short post to explain how to install and run vQFX 10k on ESXi 5.5.

First you need to download vQFX. A trial version is available.

The step by step procedure is the following:

1/ Download vmdk images. One is for vQFX Routing Engine and the other one for vQFX PFE.

2/ Upload vQFX RE and PFE vmdk into your datastore

3/ Convert the vmdk images before using them. Open your ESXi terminal and go to the volume and folder that host the previously uploaded images:

vmkfstools -i vqfx10k-re-15.1X53-D60.vmdk vqfx10kRE.vmdk -d thin -a buslogic

vmkfstools -i vqfx10k-pfe-20160609-2.vmdk vqfx10kPFE.vmdk -d thin -a buslogic

4/ Then create the 2 VMs.

Create RE VM:

Create PFE VM:

5/ Attach the 1st NIC of both VMs to your out of band virtual switch.

6/ Create a new virtual switch for inter-VM communication (RE <> PFE). Enable promiscuous mode and set the MTU to 9000. Then attach the 2nd NIC of both VMs to this new virtual switch.

7/ Attach the extra data plane NICs (of the RE VM) to other virtual switches connected, for instance, to a physical NIC or another VM (such as another vQFX or a vMX).

8/ Run both VMs

9/ Use the ESXi console to access the vQFX RE:

The default login for the RE VM is:

login : root

pwd : Juniper

Then type: cli

Add configuration for root & other users and em0 configuration for out of band management.

10/ Configure your data plane interfaces, from xe-0/0/0 up to xe-0/0/11.

11/ Then, Enjoy switching ;)

David.

I was wondering whether I could use the embedded tcpdump of Junos to monitor transit traffic.

I found a way to do it and this short post explains how to do that.

This tip works only on TRIO Line cards. My setup has been tested on Junos 12.3.

I used several features:

First of all, you need to find a free port on your chassis :) (not used, not connected) and configure it in loopback mode. This port may be down. Then configure on it a fake IP address with a fake next-hop (fake ARP / MAC entry).

set interfaces xe-8/0/0 gigether-options loopback

set interfaces xe-8/0/0 unit 0 family inet address 192.168.1.1/24 arp 192.168.1.2 mac 00:00:00:01:02:03

Then you can configure your port mirroring instance and choose the previously configured interface as the output interface for mirrored traffic. Here I configure a specific port-mirroring instance on the MPC that receives the transit traffic.

set chassis fpc 4 port-mirror-instance LOCAL-DUMP

Then I set up my port-mirroring instance. Don't forget the no-filter-check knob.

set forwarding-options port-mirroring instance LOCAL-DUMP input rate 1

set forwarding-options port-mirroring instance LOCAL-DUMP family inet output interface xe-8/0/0.0 next-hop 192.168.1.2

set forwarding-options port-mirroring instance LOCAL-DUMP family inet output no-filter-check

And finally apply mirroring (here in the input direction) on the interface where you want to catch specific transit traffic. Here I want to punt to my local tcpdump the traffic sent to IP 60.0.3.1/32 on TCP port 80.

set firewall family inet filter MIRROR term 1 from destination-address 60.0.3.1/32

set firewall family inet filter MIRROR term 1 from protocol tcp

set firewall family inet filter MIRROR term 1 from port 80

set firewall family inet filter MIRROR term 1 then port-mirror-instance LOCAL-DUMP

set firewall family inet filter MIRROR term 1 then accept

set firewall family inet filter MIRROR term 2 then accept

set interfaces ae0 unit 0 family inet filter input MIRROR
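To make the match logic of term 1 explicit, here is a minimal Python sketch of the same three conditions. It is only a model for reasoning about which packets get mirrored, not something that runs on the router; the function name `matches_mirror_term` is hypothetical. It assumes that a `from port` condition matches either the source or the destination port.

```python
import ipaddress

# Values copied from the MIRROR filter above.
MIRRORED_DESTINATION = ipaddress.ip_network("60.0.3.1/32")
MIRRORED_PORT = 80


def matches_mirror_term(dst_ip: str, protocol: str, src_port: int, dst_port: int) -> bool:
    """Return True when a packet would hit term 1 and be port-mirrored."""
    destination_ok = ipaddress.ip_address(dst_ip) in MIRRORED_DESTINATION
    protocol_ok = protocol == "tcp"
    # "from port" is modeled as matching either the source or the destination port.
    port_ok = MIRRORED_PORT in (src_port, dst_port)
    return destination_ok and protocol_ok and port_ok


# A packet from the capture shown later in this post (source port 60, destination port 80).
print(matches_mirror_term("60.0.3.1", "tcp", 60, 80))
# Same ports, but another destination address: falls through to term 2.
print(matches_mirror_term("60.0.3.2", "tcp", 60, 80))
```

Packets that do not match simply hit term 2 and are accepted without being mirrored.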

At this step you can check that port-mirroring instance is UP.

sponge@bob> show forwarding-options port-mirroring

Instance Name: LOCAL-DUMP

Instance Id: 2

Input parameters:

Rate : 1

Run-length : 0

Maximum-packet-length : 0

Output parameters:

Family State Destination Next-hop

inet up xe-8/0/0.0 192.168.1.2

You can also see traffic statistics on the output interface xe-8/0/0 (remember this interface is in loopback mode):

interface: xe-8/0/0, Enabled, Link is Up

Encapsulation: Ethernet, Speed: 10000mbps

Traffic statistics: Current delta

Input bytes: 2594148207030 (0 bps) [0]

Output bytes: 2824068833619 (1168363304 bps) [1161604288]

Input packets: 1958284956 (0 pps) [0]

Output packets: 2411685324 (268465 pps) [2135302]

No worries! Here, the 268k pps are actually dropped at PFE level as normal discard without any impact.

sponge@bob> show pfe statistics traffic fpc 8 | match Normal

Normal discard : 1202137974

Now it's time to play with “exceptions”. The aim is to tell the PFE attached to the output interface xe-8/0/0 to "punt" to the RE some of the packets that are discarded on xe-8/0/0.

To do that I used an exception named host-route-v4. This exception is triggered when a packet needs to be routed by the RE (RIB). In normal conditions it is never used (or only in rare cases). This exception is rate-limited by default to 2000pps. For security purposes I preferred to rate-limit it to 100pps on the MPC that hosts the output interface, here the MPC in slot 8. I used a bandwidth scale of 5% of 2000pps to obtain my 100pps allowed for MPC 8.

To do that I added some configuration at ddos-protection level for this specific exception:

set system ddos-protection protocols unclassified host-route-v4 fpc 8 bandwidth-scale 5
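The arithmetic behind the bandwidth-scale value can be sketched in a few lines of Python. This is just a helper to pick a percentage for a target rate; the function name `scaled_policer_pps` is hypothetical, and it assumes the scale is applied as a plain percentage of the default policer rate, as described above.

```python
def scaled_policer_pps(default_pps: int, bandwidth_scale_percent: int) -> int:
    """Return the per-FPC policer rate after applying a bandwidth-scale percentage."""
    if not 1 <= bandwidth_scale_percent <= 100:
        raise ValueError("bandwidth-scale is expected to be a percentage between 1 and 100")
    return default_pps * bandwidth_scale_percent // 100


# Default host-route-v4 policer of 2000pps scaled to 5% on FPC 8.
print(scaled_policer_pps(2000, 5))
```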

Now, to force packets to be host-routed, I created a new firewall filter with a "next-ip" action set to the local IP address of my output interface xe-8/0/0.

set firewall family inet filter to_DUMP term 1 then next-ip 192.168.1.1/32

And finally I applied this filter on the output interface xe-8/0/0:

set interfaces xe-8/0/0 unit 0 family inet filter input to_DUMP

After you have committed, you should see a DDOS-protection warning in syslog:

Mar 17 11:07:01 bob jddosd[1882]: DDOS_PROTOCOL_VIOLATION_SET: Protocol Unclassified:host-route-v4 is violated at fpc 8 for 6 times

No worries again. We rate-limit this exception at 100pps (scale bandwidth of 5%).

sponge@bob> show ddos-protection protocols violations

Packet types: 190, Currently violated: 1

Protocol Packet Bandwidth Arrival Peak Policer bandwidth

group type (pps) rate(pps) rate(pps) violation detected at

uncls host-rt-v4 2000 0 0 2015-03-17 09:45:27 CET

Detected on: FPC-8

Important: if you have a firewall filter applied on your lo0 to protect your RE, and if this filter has a final term that discards all unauthorized traffic, you should temporarily deactivate this filter or term to allow punted traffic.

Now, let’s monitor traffic of interface xe-8/0/0 (our output interface) :

sponge@bob> monitor traffic interface xe-8/0/0.0 no-resolve

verbose output suppressed, use <detail> or <extensive> for full protocol decode

Address resolution is OFF.

Listening on xe-8/0/0.0, capture size 96 bytes

11:08:39.313460 In IP 161.0.0.1.60 > 60.0.3.1.80: . 0:504(504) win 0

11:08:39.313586 In IP 161.0.0.1.61 > 60.0.3.1.80: . 0:504(504) win 0

11:08:39.313589 In IP 161.0.0.1.64 > 60.0.3.1.80: . 0:504(504) win 0

11:08:39.313592 In IP 161.0.0.1.65 > 60.0.3.1.80: . 0:504(504) win 0

11:08:39.313594 In IP 161.0.0.1.62 > 60.0.3.1.80: . 0:504(504) win 0

11:08:39.313596 In IP 161.0.0.1.644 > 60.0.3.1.80: . 0:504(504) win 0

11:08:39.313598 In IP 161.0.0.1.633 > 60.0.3.1.80: . 0:504(504) win 0

Sounds good! You can now use all matching criteria of the Junos tcpdump to analyze packets. Remember packets are rate-limited at 100pps, so you shouldn’t see the entire stream.

sponge@bob> monitor traffic interface xe-8/0/0.0 no-resolve matching "tcp src port 60" size 1500 detail print-ascii

Address resolution is OFF.

Listening on xe-8/0/0.0, capture size 1500 bytes

11:10:55.791814 In IP (tos 0x0, ttl 14, id 0, offset 0, flags [none], proto: TCP (6), length: 544) 161.0.0.1.60 > 60.0.3.1.80: . 0:504(504) win 0

0x0000 0200 0000 4500 0220 0000 0000 0e06 cad6 ....E...........

0x0010 a100 0001 3c00 0301 003c 0050 0000 0000 ....<....<.P....

0x0020 0000 0000 5000 0000 f666 0000 4745 5420 ....P....f..GET.

0x0030 2f64 6f77 6e6c 6f61 642e 6874 6d6c 2048 /download.html.H

0x0040 5454 502f 312e 310d 0a48 6f73 743a 2077 TTP/1.1..Host:.w

0x0050 7777 2e65 7468 6572 6561 6c2e 636f 6d0d ww.ethereal.com.

0x0060 0a55 7365 722d 4167 656e 743a 204d 6f7a .User-Agent:.Moz

0x0070 696c 6c61 2f35 2e30 2028 5769 6e64 6f77 illa/5.0.(Window

0x0080 733b 2055 3b20 5769 6e64 6f77 7320 4e54 s;.U;.Windows.NT

0x0090 2035 2e31 3b20 656e 2d55 533b 2072 763a .5.1;.en-US;.rv:

0x00a0 312e 3629 2047 6563 6b6f 2f32 3030 3430 1.6).Gecko/20040

0x00b0 3131 330d 0a41 6363 6570 743a 2074 6578 113..Accept:.tex

0x00c0 742f 786d 6c2c 6170 706c 6963 6174 696f t/xml,applicatio

Notice 1: Packets are only punted to the RE while the tcpdump command is running. You can check punted packets here:

sponge@bob> show pfe statistics traffic fpc 8 | match local

Packet Forwarding Engine local traffic statistics:

Local packets input : 1164 <<<<

Local packets output : 0

Notice 2: You can do the same for IPv6 traffic with exception host-route-v6.

David

Here is a short post to explain how you can monitor the control plane activity with ddos-protection's statistics and a simple op-script.

ddos-protection is a feature enabled by default and only available on MPC cards; it protects the linecard's CPU and the Routing Engine's CPU. ddos-protection maintains, per protocol and for some protocols per packet type, the current and maximum packet arrival rates. Statistics are available per MPC and per chassis (RE point of view).

Sample cli output for ICMP protocol :

sponge@bob> show ddos-protection protocols icmp statistics

Packet types: 1, Received traffic: 1, Currently violated: 0

Protocol Group: ICMP

Packet type: aggregate

System-wide information:

Aggregate bandwidth is no longer being violated

No. of FPCs that have received excess traffic: 1

Last violation started at: 2014-11-21 11:20:33 CET

Last violation ended at: 2014-11-21 11:20:39 CET

Duration of last violation: 00:00:06 Number of violations: 1

Received: 55403 Arrival rate: 0 pps

Dropped: 7 Max arrival rate: 48 pps

Packet-type "aggregate" means "all packet types". Actually, this is the sum. The Max arrival rate is the maximum rate observed since the last clear of the statistics or the last reboot.

I developed a simple op script that displays, per protocol/packet-type, the current and maximum observed rates seen by the routing engine. Only packet types with a Max Arrival Rate greater than 0 are displayed.

This command allows you to monitor your control plane in real time and can help you to tune your ddos policers.

Here is the checkcp.slax code:

version 1.0;

ns junos = "http://xml.juniper.net/junos/*/junos";
ns xnm = "http://xml.juniper.net/xnm/1.1/xnm";
ns jcs = "http://xml.juniper.net/junos/commit-scripts/1.0";

import "../import/junos.xsl";

/*------------------------------------------------*/
/* This is version 1.0 of the op script checkcp   */
/* Written by David Roy                           */
/* door7302@gmail.com                             */
/*------------------------------------------------*/

match / {
    <op-script-results> {

        /* Retrieve the ddos-protection statistics */
        var $myrpc = <get-ddos-protocols-statistics> {};
        var $myddos = jcs:invoke ($myrpc);

        /* Now Display */
        <output> "";
        <output> "";
        <output> "+-------------------------------------------------------------------------+";
        <output> jcs:printf('|%-20s |%-20s |%-11s |%-10s\n',"Protocol","Packet Type","Current pps","Max pps Observed");
        <output> "+-------------------------------------------------------------------------+";

        /* One line per packet type whose max arrival rate is not 0 */
        for-each( $myddos/ddos-protocol-group/ddos-protocol/packet-type ) {
            var $name = .;
            if (../ddos-system-statistics/packet-arrival-rate-max != "0"){
                <output> jcs:printf('|%-20s |%-20s |%-11s |%-10s\n',../../group-name,$name,../ddos-system-statistics/packet-arrival-rate,../ddos-system-statistics/packet-arrival-rate-max);
            }
        }
        <output> "+-------------------------------------------------------------------------+";
    }
}

Just copy/paste the code above into the /var/db/scripts/op/checkcp.slax file. Then enable the script by adding this configuration:

edit

set system scripts op file checkcp.slax

commit and-quit

Finally play with the op-script:

sponge@bob> op checkcp

+-------------------------------------------------------------------------+

|Protocol |Packet Type |Current pps |Max pps Observed

+-------------------------------------------------------------------------+

|ICMP |aggregate |0 |48

|OSPF |aggregate |0 |2

|PIM |aggregate |0 |2

|BFD |aggregate |0 |11

|LDP |aggregate |0 |3

|BGP |aggregate |1 |17

|SSH |aggregate |3 |249

|SNMP |aggregate |0 |130

|LACP |aggregate |1 |2

|ISIS |aggregate |0 |5

|Reject |aggregate |0 |88080

|TCP-Flags |aggregate |6 |163

|TCP-Flags |initial |0 |1

|TCP-Flags |established |6 |163

|PIMv6 |aggregate |0 |1

|Sample |aggregate |0 |7431

|Sample |host |0 |7431

+-------------------------------------------------------------------------+
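If you prefer to post-process the statistics off-box, the same selection logic can be sketched in Python. This is a minimal sketch and not a replacement for the op script: the element names are the ones used in the SLAX code above, but the root element name and the sample document are assumptions written by hand for the example (real RPC replies also carry namespaces and many more fields). The function name `active_packet_types` is hypothetical.

```python
import xml.etree.ElementTree as ET

# Hand-written sample; the root element name is an assumption.
SAMPLE_XML = """
<ddos-protocols-information>
  <ddos-protocol-group>
    <group-name>ICMP</group-name>
    <ddos-protocol>
      <packet-type>aggregate</packet-type>
      <ddos-system-statistics>
        <packet-arrival-rate>0</packet-arrival-rate>
        <packet-arrival-rate-max>48</packet-arrival-rate-max>
      </ddos-system-statistics>
    </ddos-protocol>
  </ddos-protocol-group>
  <ddos-protocol-group>
    <group-name>NTP</group-name>
    <ddos-protocol>
      <packet-type>aggregate</packet-type>
      <ddos-system-statistics>
        <packet-arrival-rate>0</packet-arrival-rate>
        <packet-arrival-rate-max>0</packet-arrival-rate-max>
      </ddos-system-statistics>
    </ddos-protocol>
  </ddos-protocol-group>
</ddos-protocols-information>
"""


def active_packet_types(xml_text: str) -> list:
    """Return (group, packet type, current pps, max pps) for every max rate above 0."""
    root = ET.fromstring(xml_text.strip())
    rows = []
    for group in root.iter("ddos-protocol-group"):
        group_name = group.findtext("group-name", default="").strip()
        for protocol in group.findall("ddos-protocol"):
            stats = protocol.find("ddos-system-statistics")
            if stats is None:
                continue
            max_rate = int(stats.findtext("packet-arrival-rate-max", default="0"))
            if max_rate > 0:
                packet_type = protocol.findtext("packet-type", default="").strip()
                current = int(stats.findtext("packet-arrival-rate", default="0"))
                rows.append((group_name, packet_type, current, max_rate))
    return rows


for row in active_packet_types(SAMPLE_XML):
    print("|%-20s |%-20s |%-11s |%-10s" % row)
```

As in the op script, protocol groups that never received traffic (NTP in the sample) are not displayed.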

David.

Read my post about my favorite protocol, "ISIS", on the Inetzero blog and prepare for your JNCIE exam with their materials: workbooks and racks.


David

Read my post about the PIM Anycast RP feature on the Inetzero blog and prepare for your JNCIE exam with their materials: workbooks and racks.


David

uRPF provides anti-spoofing embedded at forwarding plane level. Junos has provided this feature for many years with several modes and options:

I carried out several tests on MX960 version 12.3R2 with DPC or MPC cards to test all the possible combinations of uRPF options.

1/ Explanations of Modes & options

Modes for DPC:

Strict mode:

Loose Mode:

Modes for MPC:

Strict mode:

Loose Mode:

Option 1 (for DPC and MPC):

By default uRPF uses only the best path. Indeed, only the best paths are available within the FIB, except if you use features like FRR (Fast ReRoute) or LFA (Loop Free Alternate). Otherwise, there is no backup path in the FIB; only the RIB keeps this information.

This default behavior is called “active-paths”. To take into account at FIB level the backup paths known in the RIB, you need to activate the option “feasible-paths”. This feature pushes into the FIB uRPF database the active path but also the backup path(s).

Note: this feature requires a little bit more memory at PFE level (adds ifl-list-nh entries)

Option 2 (only for MPC):

Routes with a next-hop set to DISCARD are by default not used on DPC and MPC. But for MPC only, you can add the “discard” routes to the uRPF database by using a knob called rpf-loose-mode-discard. This is a global knob that only has a meaning in Loose Mode (no effect in Strict mode).

Option 3 (for DPC and MPC):

The third option concerns the action applied to packets failing the uRPF check. By default those packets are silently discarded, but on Junos you can also apply a specific firewall filter to manage those packets (e.g. modify the forwarding class, rate-limit, or anything else allowed by a firewall filter).

As we can see, there are a lot of combinations. I tried to establish a test matrix to cover all combinations of modes, options, and types of cards.

2/ uRPF configuration

Modes configuration: This is a per-interface statement.

Strict mode configuration:

edit exclusive

set interfaces aeXX.0 family inet rpf-check

or

set interfaces xe-x/x/x.0 family inet rpf-check

commit

Loose mode configuration:

edit exclusive

set interfaces aeXX.0 family inet rpf-check mode loose

or

set interfaces xe-x/x/x.0 family inet rpf-check mode loose

commit

uRPF failure action: This is a per-interface statement.

edit exclusive

set interfaces aeXX.0 family inet rpf-check fail-filter <MyFilter>

or

set interfaces xe-x/x/x.0 family inet rpf-check fail-filter <MyFilter>

commit

Options configuration: Global configuration.

Take into account only Active Path (default):

edit exclusive

set routing-options forwarding-table unicast-reverse-path active-paths

commit

Take into account Active Path and Backup paths (pushes backup paths to the FIB – actually create new ifl-list-nh):

edit exclusive

set routing-options forwarding-table unicast-reverse-path feasible-paths

commit

For MPC only, also take into account routes with a Discard NH (here for inet routes):

edit exclusive

set forwarding-options rpf-loose-mode-discard family inet

commit

3/ uRPF troubleshooting

Verify the uRPF configuration and uRPF counters:

sponge@bob> show interfaces ae40.0 extensive | match rpf

Flags: Sendbcast-pkt-to-re, uRPF, uRPF-loose, Sample-input

RPF Failures: Packets: 9950, Bytes: 11745652

A little bit more info at PFE level:

sponge@bob> show interfaces ae41.0 | match Index

Logical interface ae41.0 (Index 332) (SNMP ifIndex 833)

sponge@bob> start shell pfe network fpc4

NPC4(bob vty)# show route rpf loose-discard-mode proto ip

RPF loose-discard mode for IPv4: Enabled

Platform support: Yes, Walk RTT: No

NPC4(bob vty)# show route rpf iff ifl-index 332 proto ip

RPF mode: Loose

Fail filter index: 0

Bytes: 0

Pkts: 0

To know which RPF interfaces are used to perform Reverse Lookup, let’s take one simple example:

This route has 2 paths, one active path through ae40.0 and a backup path through ae41.0.

sponge@bob> show route 1.0.0.0/30

inet.0: 905188 destinations, 1351252 routes (905178 active, 13 holddown, 0 hidden)

+ = Active Route, - = Last Active, * = Both

1.0.0.0/30 *[BGP/170] 17:29:36, localpref 0, from 192.168.37.1

AS path: I, validation-state: unverified

> to 81.253.193.9 via ae40.0

[BGP/170] 16:35:47, localpref 0, from 192.168.37.2

AS path: I, validation-state: unverified

> to 81.253.193.18 via ae41.0

Strict mode is configured on each interface and only active paths are used by uRPF.

sponge@bob> show route forwarding-table destination 1.0.0.1/32 extensive table default

Routing table: default.inet [Index 0]

Internet:

Destination: 1.0.0.0/30

Route type: user

Route reference: 0 Route interface-index: 0

Flags: sent to PFE

Next-hop type: indirect Index: 1048584 Reference: 200001

Nexthop: 81.253.193.9

Next-hop type: unicast Index: 595 Reference: 16

Next-hop interface: ae40.0

RPF interface: ae40.0

You can see that only ae40.0 is allowed to receive datagrams with a source IP address equal to 1.0.0.1/32. Now we configure the feasible-paths feature. The 2 paths (active and backup) should be allowed to receive traffic coming from 1.0.0.1/32. Let’s check:

sponge@bob> show route forwarding-table destination 1.0.0.1/32 extensive table default

Routing table: default.inet [Index 0]

Internet:

Destination: 1.0.0.0/30

Route type: user

Route reference: 0 Route interface-index: 0

Flags: sent to PFE

Next-hop type: indirect Index: 1048584 Reference: 200001

Nexthop: 81.253.193.9

Next-hop type: unicast Index: 595 Reference: 16

Next-hop interface: ae40.0

RPF interface: ae40.0

RPF interface: ae41.0

Cool, now the backup interface is also allowed to receive traffic coming from 1.0.0.1 (Nice for multi-homing )
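The behavior observed above can be summarized with a small Python model. It is a deliberately simplified sketch: it assumes the usual definitions of the modes (strict: the packet must arrive on an RPF interface of the route to its source; loose: any route to the source is enough) and ignores default routes, discard next-hops and fail-filters. The class and function names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Route:
    """Route towards the packet's source address, as known by the RIB."""
    active_interface: str
    backup_interfaces: List[str] = field(default_factory=list)


def rpf_interfaces(route: Route, feasible_paths: bool = False) -> Set[str]:
    """Interfaces installed as 'RPF interface' in the FIB for this route."""
    allowed = {route.active_interface}
    if feasible_paths:
        # feasible-paths also pushes the backup path(s) to the FIB.
        allowed.update(route.backup_interfaces)
    return allowed


def urpf_pass(route: Optional[Route], in_interface: str,
              mode: str = "strict", feasible_paths: bool = False) -> bool:
    """Return True when the packet passes the (simplified) uRPF check."""
    if route is None:
        return False  # no route back to the source: fails in both modes
    if mode == "loose":
        return True   # loose mode only requires that a route exists
    return in_interface in rpf_interfaces(route, feasible_paths)


# The 1.0.0.0/30 example: active path via ae40.0, backup path via ae41.0.
route = Route("ae40.0", ["ae41.0"])
print(urpf_pass(route, "ae41.0"))                       # strict, active-paths
print(urpf_pass(route, "ae41.0", feasible_paths=True))  # strict, feasible-paths
```

With active-paths, a packet sourced from 1.0.0.1 and received on ae41.0 fails the strict check; with feasible-paths it passes, which is what the second forwarding-table output shows.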

4/ uRPF IPv4 test results

The next 2 tables summarize the tests carried out on MPC and DPC. Hereafter is the explanation of each column:

MPC test results:

DPC test results:

David.

Recently, we received 2 new MPC cards in the lab:

- The MPC4e Combo card: 2x100GE + 8x10GE ports (MPC4E 3D 2CGE+8XGE)

- The MPC4e 32x10GE ports (MPC4E 3D 32XGE)

These 2 new cards need at least Junos 12.3 and can be used on both dense chassis: MX960 and MX2000. Here, I only present the MPC4e on the MX960 chassis.

I played with these 2 new cards to better understand how packets are managed at PFE level. Here is my analysis:

Introduction – MPC4e on MX960:

To work, MPC4e cards need at least SCB-E fabric cards. Remember that an MX960 can host 3 SCB-E cards and each of them has 2 fabric planes. In the normal configuration, SCBs work in 2+1 mode (aka. redundancy mode). In this configuration, each PFE has active high-speed links towards 4 planes and 2 other paths towards the 2 standby planes hosted by the standby SCB-E.

In this configuration (2+1), the SCB-E is a real bottleneck, so a new feature has been introduced to increase the fabric bandwidth by switching the fabric to 3+0 mode (no more redundancy). To recover fabric redundancy we have to wait for the third generation of SCB, named SCB-E2.

However, fabric components are more "basic" than PFE ASICs and are therefore very stable. I’ve rarely seen an SCB crash. But it could happen, and good use of CoS can keep critical traffic from being dropped when the chassis encounters a fabric failure (playing with loss priorities is recommended).

To enable the 3+0 SCB-E mode, apply this configuration:

set chassis fabric redundancy-mode increased-bandwidth

After that, the 6 planes of the chassis become active (Online):

sponge@bob> show chassis fabric summary

Plane State Uptime

0 Online 13 hours, 23 minutes, 21 seconds

1 Online 13 hours, 23 minutes, 16 seconds

2 Online 13 hours, 23 minutes, 11 seconds

3 Online 13 hours, 23 minutes, 6 seconds

4 Online 13 hours, 23 minutes, 1 second

5 Online 13 hours, 22 minutes, 56 seconds

sponge@bob> show chassis fabric redundancy-mode

Fabric redundancy mode: Increased Bandwidth

Deep presentation:

The MPC4e is a trio-based card which uses an enhanced version of the TRIO ASIC. An MPC4e card is made of 2 PFEs. Each PFE is a set of ASICs:

- 1 XMq chip (enhanced Mq chip version (used by MPC1/2 or MPC 16x10GE))

- 2 LU chip

Each PFE has a line rate of around 130Gbits/s depending on the packet size, so each MPC4e card can deliver around 260Gbits/s. But, as I said previously, with the current MX960 fabric cards (SCB-E) each PFE has either 80Gbits/s to and from the fabric in 2+1 mode or 120Gbits/s in 3+0 mode. This is why I recommend the 3+0 mode with SCB-E cards. In 3+0 SCB-E mode each MPC4e card has around 240Gbits/s to and from the fabric. But remember that intra-PFE traffic doesn’t use the fabric links, so MPC4e performance is really around 2x130Gbits/s. The full capacity of the MPC4e ASICs should be available with SCB-E2 cards.

One important thing to notice:

The PFE has a 130Gb/s total bandwidth capacity, but this bandwidth is divided between two virtual WAN groups of 65Gb/s.

The 32x10GE card is logically divided into 4 PICs of 8 ports. PIC 0 and PIC 1 are connected to PFE 0, and PIC 2 and PIC 3 belong to PFE 1. For a given PFE, for instance PFE 0, group 0 (named WAN0) of 65Gbits/s is associated with PIC 0 and the other 65Gbits/s group, WAN1, is associated with PIC 1. Each PIC has 80Gbits/s of port bandwidth, but the unused bandwidth of one group can be re-allocated to the other. So the PFE capacity of 130Gbits/s is shared fairly by all sixteen ports, and if you oversubscribe the PFE (more than 130Gbits/s), you will see equal loss on all sixteen ports.

The case of the combo card is different. A given PFE is associated with 2 virtual PICs: 4x10GE ports belong to PIC 0 and the 100GE port to PIC 1. Each PIC is associated with a WAN group with a 65Gbits/s “transmit-rate”. Without oversubscription you can use the 100GE port at line rate, because the unused part of WAN0's 65Gbits/s can be consumed by WAN1 if needed. Nevertheless, if you oversubscribe the 130Gbits/s PFE bandwidth, you will not see proportional loss between the 100GE port and the 4x10GE ports, because the 4x10GE ports belonging to WAN0 will never exceed 40Gbits/s (always below the 65Gbits/s “transmit-rate”). Important notice: high priority traffic will not be affected, whatever the input interface.

The 2 next diagrams depict an internal view of the MPC4e combo card and the MPC4e 32x10GE card (It's what I have deduced by playing with PFE commands).

The MPC4e combo card internal representation

The MPC4e 32x10GE card internal representation

Performance results:

We carried out many stress tests on both cards in 3+0 SCB-E mode. Both cards have an ASIC capacity of 260Gbits/s depending on the packet size. Indeed, small packets (around 64B) stress the ASIC more. But this is nothing new: it was the case for the previous versions of Juniper ASICs and it is also the case for other vendors.

In case of card oversubscription, you will see that the 100GE port loses traffic and the 4x10GE ports almost do not. This is due to the design of the MPC4e PFE, with its 2 virtual WAN groups of 65Gbits/s. But remember that with a 100GE port and only 3x10GE ports in use there is no drop. You only experience low priority traffic loss on the 100GE port when you add the last 10GE port. This is not the case for the 32x10GE card, where traffic loss is equally shared among the 10GE ports.
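To illustrate the WAN group behavior, here is a small Python model of the sharing rule as I understand it from the tests: each group first gets up to its 65Gbits/s, then the unused bandwidth of one group is lent to the other. It is only a sketch of the observed behavior, not the real scheduler; it ignores priorities and packet sizes, and the function name `share_pfe_bandwidth` is hypothetical.

```python
PFE_CAPACITY_GBPS = 130.0
GROUP_TRANSMIT_RATE_GBPS = 65.0


def share_pfe_bandwidth(offered_wan0: float, offered_wan1: float) -> tuple:
    """Return the bandwidth (Gbits/s) granted to WAN0 and WAN1 for the offered loads."""
    offered = [offered_wan0, offered_wan1]
    # Step 1: each group is served up to its own transmit-rate.
    granted = [min(load, GROUP_TRANSMIT_RATE_GBPS) for load in offered]
    # Step 2: whatever is left on the PFE is lent to the group that still wants more.
    spare = PFE_CAPACITY_GBPS - sum(granted)
    for i in range(2):
        extra = min(offered[i] - granted[i], spare)
        granted[i] += extra
        spare -= extra
    return tuple(granted)


print(share_pfe_bandwidth(30, 100))  # combo card: 3x10GE + 100GE
print(share_pfe_bandwidth(40, 100))  # combo card: 4x10GE + 100GE
print(share_pfe_bandwidth(80, 80))   # 32x10GE card: both PICs of a PFE at line rate
```

With these inputs the model reproduces the three cases described above: no loss with 3x10GE + 100GE, loss only on the 100GE side with 4x10GE + 100GE, and equal loss on both groups of the 32x10GE card.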

The second important thing to notice is the fabric bottleneck with the current SCB-E cards:

- 2+1 SCB-E mode : fabric BW 80Gbits/s per PFE or 160Gbits/s per MPC4e

- 3+0 SCB-E mode : fabric BW 120Gbits/s per PFE or 240Gbits/s per MPC4e
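The figures above can be recomputed with a few lines of Python. The per-plane value of 20Gbits/s per PFE is not a datasheet number: it is simply derived from the 80Gbits/s over 4 active planes mentioned earlier, so treat it as an assumption. The function name `fabric_bandwidth_gbps` is hypothetical.

```python
PLANES_PER_SCB = 2
PFES_PER_MPC4E = 2
# Assumption derived from this post: 80Gbits/s per PFE over 4 active planes.
GBPS_PER_PLANE_PER_PFE = 20


def fabric_bandwidth_gbps(active_scbs: int) -> tuple:
    """Return (per-PFE, per-MPC4e) fabric bandwidth for a number of active SCB-E cards."""
    active_planes = active_scbs * PLANES_PER_SCB
    per_pfe = active_planes * GBPS_PER_PLANE_PER_PFE
    return per_pfe, per_pfe * PFES_PER_MPC4E


print(fabric_bandwidth_gbps(2))  # 2+1 mode: 2 active SCB-E, 4 active planes
print(fabric_bandwidth_gbps(3))  # 3+0 mode: 3 active SCB-E, 6 active planes
```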

In practice, in 3+0 mode we reached a throughput to and from the fabric of around 230Gbits/s for an MPC4e with packets of 320 bytes and larger (out of the 240Gbits/s (2x120) theoretically available).

In conclusion, the performance of both MPC4e cards is close to what can be read in the datasheet.

What else? Troubleshooting:

During the tests in the lab I also played with the PFE CLI of the MPC4e. I've drawn this picture with some interesting commands (remember: not supported by the JTAC :-) )

David.

Introduction

Recently I carried out tests in labs to evaluate the FlowSpec implementation on MX960 router with TRIO MPC cards. I used a 12.3 Junos release.

Those tests have covered:

- IPv4 blackholing traffic feature

- IPv4 rate-limiting traffic feature

- IPv4 traffic redirection for traffic mitigation (redirect within a VRF)

All tests passed successfully, with just one limitation on traffic redirection presented below. This post presents how FlowSpec is implemented at RE and PFE level. It also presents the configuration template for redirecting traffic through a specific VRF. The “remarking” feature has not been tested.

FlowSpec’s theory

BGP FlowSpec is defined in RFC 5575: “Dissemination of Flow Specification Rules”. This RFC describes a new NLRI that conveys flow specifications (@IP src ; DST ; proto ; port number …) and the associated traffic actions/rules (rate-limiting, redirect, remark …). For IPv4, the RFC defines the following AFI/SAFI value: AFI=1 SAFI=133. FlowSpec uses two existing BGP “containers” to convey both the flow specifications and the associated rules.

Indeed, FLOW specifications are encoded within the MP_REACH_NLRI and MP_UNREACH_NLRI attributes. Rules (Actions associated) are encoded in Extended Community attribute.

Within the MP_REACH_NLRI a flow specification is made of a set of components, and the components of a flow are combined with an AND operator. Below we describe the different components available to describe a specific flow (encoded in the NLRI variable field). The Next-Hop length and Next-Hop value should be set to 0 (in the case of RFC 5575). Note that it should be set to a value other than 0 in this case: BGP Flow-Spec Extended Community for Traffic Redirect to IP Next Hop (draft-simpson-idr-FlowSpec-redirect-02.txt)

NLRI FlowSpec Validation:

Received FlowSpec BGP updates must pass validation steps before they are installed from the Adj-RIB-IN into the LOC-RIB. Next-Hop validation must not be taken into account, because the FlowSpec next hop (RFC 5575) is always set to 0. But other validations have to be done before installation (extracted from the RFC):

a) The originator of the flow specification matches the originator of the best-match unicast route for the destination prefix embedded in the flow specification.

b) There are no more specific unicast routes, when compared with the flow destination prefix, that have been received from a different neighboring AS than the best-match unicast route, which has been determined in step a).
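The two validation steps can be sketched in Python as follows. This is a simplified model written for illustration only: it assumes one (best) unicast route per prefix, treats the best-match route as the longest covering prefix, and all class, field and function names are hypothetical rather than taken from any BGP implementation.

```python
import ipaddress
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class UnicastRoute:
    prefix: str        # e.g. "10.0.0.0/8"
    originator: str    # BGP originator of the route
    neighbor_as: int   # neighboring AS the route was received from


def validate_flow_route(flow_destination: str, flow_originator: str,
                        unicast_routes: List[UnicastRoute]) -> bool:
    """Apply validation steps a) and b) to a flow specification (simplified)."""
    destination = ipaddress.ip_network(flow_destination)

    # Step a): find the best-match (longest covering) unicast route and compare originators.
    covering = [route for route in unicast_routes
                if destination.subnet_of(ipaddress.ip_network(route.prefix))]
    if not covering:
        return False
    best = max(covering, key=lambda route: ipaddress.ip_network(route.prefix).prefixlen)
    if best.originator != flow_originator:
        return False

    # Step b): reject if a more specific route comes from a different neighboring AS.
    for route in unicast_routes:
        network = ipaddress.ip_network(route.prefix)
        is_more_specific = network != destination and network.subnet_of(destination)
        if is_more_specific and route.neighbor_as != best.neighbor_as:
            return False
    return True


routes = [
    UnicastRoute("10.0.0.0/8", "192.0.2.1", 65001),
    UnicastRoute("10.1.1.0/24", "192.0.2.9", 65002),
]
print(validate_flow_route("10.2.0.0/16", "192.0.2.1", routes))  # passes a) and b)
print(validate_flow_route("10.1.0.0/16", "192.0.2.1", routes))  # fails b)
```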

These validations can cause problems when you use, for example, an external server to inject FlowSpec updates. Implementations may deactivate these validation steps.

FlowSpec components ?

Notice from the RFC: “Flow specification components must follow strict type ordering. A given component type may or may not be present in the specification, but if present, it MUST precede any component of higher numeric type value.”

Type 1: Destination prefix component

Type 2: Source prefix component

Type 3: IP Protocol component

The option byte is defined as follows:

- E bit: end of option (Must be set to 1 for the last Option)

- A bit: AND bit, if set the operation between several [option/value] is AND, if unset the operation is a logical OR. Never set for the first Option

- Len: If 0 the following value is encoded in 1 byte ; if 1 the following value is encoded in 2 bytes

- Lt bit: less than comparison between the Data and the given value

- Gt bit: greater than comparison between the Data and the given value

- Eq bit: equal comparison between the Data and the given value

Type 4: Port number component

Type 5: Destination port number component

Type 6: Source port number component

Type 7: ICMP Type component

Type 8: ICMP Code component

Type 9: TCP Flags component

The option byte is defined as follows:

- E bit: end of option (Must be set to 1 for the last Option)

- A bit: AND bit, if set the operation between several [option/value] is AND, if unset the operation is a logical OR. Never set for the first Option

- Len: If 0 the following value is encoded in 1 byte ; if 1 the following value is encoded in 2 bytes

- NOT bit: logical negation operation between Data and the given value

- m bit: match operation between the Data and the given value

Type 10: Packet Length component

Type 11: DSCP Value component
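The two option-byte layouts described above can be decoded with a short Python sketch. The bit positions follow the layout given in RFC 5575 (E is the most significant bit, then A, then a 2-bit length field where the value length is 1 << len, which gives the 1-byte and 2-byte cases listed above). The function names are hypothetical and the code is only meant to help read a hex dump, not to be a full NLRI parser.

```python
def decode_numeric_operator(op: int) -> dict:
    """Decode the option byte used by numeric components (types 3 to 8, 10 and 11)."""
    return {
        "end_of_list": bool(op & 0x80),           # E bit
        "and": bool(op & 0x40),                   # A bit
        "value_length": 1 << ((op >> 4) & 0x03),  # Len field
        "lt": bool(op & 0x04),
        "gt": bool(op & 0x02),
        "eq": bool(op & 0x01),
    }


def decode_bitmask_operator(op: int) -> dict:
    """Decode the option byte used by the TCP Flags component (type 9)."""
    return {
        "end_of_list": bool(op & 0x80),           # E bit
        "and": bool(op & 0x40),                   # A bit
        "value_length": 1 << ((op >> 4) & 0x03),  # Len field
        "not": bool(op & 0x02),
        "match": bool(op & 0x01),
    }


def parse_numeric_component(data: bytes) -> list:
    """Parse the [option, value] pairs that follow a numeric component type byte."""
    pairs = []
    offset = 0
    while offset < len(data):
        option = decode_numeric_operator(data[offset])
        offset += 1
        length = option["value_length"]
        value = int.from_bytes(data[offset:offset + length], "big")
        offset += length
        pairs.append((option, value))
        if option["end_of_list"]:
            break
    return pairs


# "IP protocol equals 17 (UDP)": option byte 0x81 (E + Eq), value 0x11.
for option, value in parse_numeric_component(bytes([0x81, 0x11])):
    print(option, value)
```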

After the flow definition, Traffic Actions (rules) are encoded as Extended Community Attribute (see RFC 4360)

There are 4 types of “Action”, each with a dedicated Extended Community type. The table below lists the currently available actions:

Traffic-rate action:

Used to discard or rate-limit a specific flow. The discard action is actually a rate equal to zero. The remaining 4 octets carry the rate information (in bytes/sec) in IEEE floating point [IEEE.754.1985] format. A nice tool to troubleshoot the encoded rate can be found here: http://www.h-schmidt.net/FloatConverter/IEEE754.html
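The encoding can also be checked locally with Python's struct module. This is a minimal sketch: it assumes the traffic-rate extended community layout of RFC 5575 (type 0x8006, a 2-byte AS number, then the 4-byte float), and the function names are hypothetical. The 15 kbits/s example is the rate used in the Junos static flow route shown later in this post, which appears there as 1875 bytes/s.

```python
import struct

TRAFFIC_RATE_TYPE = 0x8006  # traffic-rate extended community type in RFC 5575


def encode_traffic_rate(asn: int, bits_per_second: float) -> bytes:
    """Build the 8-byte traffic-rate extended community for a rate given in bits/s."""
    bytes_per_second = bits_per_second / 8
    # Network byte order: 2-byte type, 2-byte AS number, 4-byte IEEE 754 float.
    return struct.pack("!HHf", TRAFFIC_RATE_TYPE, asn, bytes_per_second)


def decode_traffic_rate(community: bytes) -> tuple:
    """Return (AS number, rate in bytes/s) from a traffic-rate extended community."""
    community_type, asn, bytes_per_second = struct.unpack("!HHf", community)
    if community_type != TRAFFIC_RATE_TYPE:
        raise ValueError("not a traffic-rate extended community")
    return asn, bytes_per_second


community = encode_traffic_rate(0, 15000)
print(community.hex())
print(decode_traffic_rate(community))
```

A rate of 0 encodes as four zero bytes, which is the discard case.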

Traffic-action action:

Used to trigger specific processing of the corresponding flow. Only the last 2 bits of the 6 bytes are currently defined, as follows:

- Terminal Action (bit 47): When this bit is set, the traffic filtering engine will apply any subsequent filtering rules (as defined by the ordering procedure). If not set, the evaluation of the traffic filter stops when this rule is applied.

- Sample (bit 46): Enables traffic sampling and logging for this flow specification.
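Reading these two flags from the 6-byte value is straightforward. In the sketch below, bit 47 is taken as the least significant bit of the 6 bytes and bit 46 as the next one, which follows from "the last 2 bits" above. The function name `decode_traffic_action` is hypothetical.

```python
def decode_traffic_action(value: bytes) -> dict:
    """Decode the Terminal Action and Sample bits of a traffic-action value (6 bytes)."""
    if len(value) != 6:
        raise ValueError("the traffic-action value field is 6 bytes long")
    flags = int.from_bytes(value, "big")
    return {
        "terminal_action": bool(flags & 0x01),  # bit 47
        "sample": bool(flags & 0x02),           # bit 46
    }


print(decode_traffic_action(bytes.fromhex("000000000003")))
```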

Redirect action:

Traffic redirection lets you specify a “route-target” community which the router uses to redirect a flow to a specific VRF.

Traffic-marking action:

Used to force a flow to be rewritten with a specific DSCP value when it leaves the router.

Flow Specification and JUNOS

Junos allows 2 kinds of flow routes. First of all, static flow routes, which are configured like this:

#Example: Rate-limiting flow (@IP source 10.1.1.1/32 DNS traffic) at 15Kbits/s

sponge@bob# show routing-options flow

route static-flow1 {
    match {
        source 10.1.1.1/32;
        protocol udp;
        port 53;
    }
    then rate-limit 15k;
}

The BGP flow route family is configured as follows. With the flow family you can associate a policy-statement to disable the route validation process (this allows direct installation of FlowSpec routes in the BGP LOC-RIB and bypasses the FlowSpec route validation process described in chapter 1).

[edit protocols bgp]
group FLOWSPEC {
    type internal;
    local-address 10.1.1.3;
    family inet {
        unicast;
        flow {
            no-validate NO-VAIDATION;
        }
    }
    neighbor 10.1.1.2 {
        description FS-SERVER;
    }
}

[edit policy-options]
policy-statement NO-VAIDATION {
    term 1 {
        then accept;
    }
}

Flow routes (BGP or static) are installed within the table “inetflow.0”.

sponge@bob> show route table inetflow.0 detail

inetflow.0: 1 destinations, 1 routes (2 active, 0 holddown, 0 hidden)

*,10.1.1.1,proto=17,port=53/term:2 (1 entry, 1 announced)

*Flow Preference: 5

Next hop type: Fictitious

Address: 0x904d5e4

Next-hop reference count: 2

State: <Active>

Local AS: 65000

Age: 38

Validation State: unverified

Task: RT Flow

Announcement bits (2): 0-Flow 1-BGP_RT_Background

AS path: I

AS path: Recorded

Communities: traffic-rate:0:1875

As I said previously, the Next-Hop is always Fictitious (because it is set to 0). The Flow Specification is encoded as an n-tuple (the BGP flow route) and its rules as an extended community. Here, the rule type is traffic-rate, used for rate-limiting or discarding (rate=0) a specific flow.

Junos then converts this route into a well-known firewall filter and pushes the filter update to every PFE in the input direction. This firewall filter is called “__FlowSpec_default_inet__”.

This is not a hidden filter and you can access it via the CLI command:

sponge@bob> show firewall filter __FlowSpec_default_inet__

Filter: __FlowSpec_default_inet__

Counters:

Name Bytes Packets

*,10.1.1.1,proto=17,port=53 0 0

Policers:

Name Bytes Packets

15K_*,10.1.1.1,proto=17,port=53 0 0

Actually, the FlowSpec firewall filter is a dynamic firewall filter which combines, in a single filter, all the Flow Specification routes (as “from” criteria) and their associated rules (as “then” criteria). Each route can be viewed as a term.

The FlowSpec firewall filter is always the first firewall filter evaluated. You can still use your own firewall filter(s), applied via the command “set interface xxx family inet filter [input|input-list|output|output-list]”: they are always evaluated after the FlowSpec filter.

Notice: you can never bypass the “__FlowSpec_default_inet__” filter. Also remember that FLOW SPEC Firewall Filter is applied in input direction at PFE level.

At PFE level you can use this command to check how “__FlowSpec_default_inet__” is programmed.

ADPC0(bob vty)# show filter

Program Filters:

---------------

Index Dir Cnt Text Bss Name

-------- ------ ------ ------ ------ --------

[…]

17000 52 0 4 4 __default_arp_policer__

57008 104 288 16 16 __cfm_filter_shared_lc__

65024 104 144 36 36 __FlowSpec_default_inet__

65280 52 0 4 4 __auto_policer_template__

65281 104 0 16 16 __auto_policer_template_1__

[…]

16777216 104 288 36 36 fnp-filter-level-all

ADPC0(bob vty)# show filter index 65024 program

Program Filters:

---------------

Index Dir Cnt Text Bss Name

-------- ------ ------ ------ ------ --------

65024 104 144 36 36 __FlowSpec_default_inet__

Action directory: 2 entries (104 bytes)

0: accept counter 0 policer 0

-> 7:

1: accept

-> 8:(bss location 3:)

Counter directory: 1 entry (144 bytes)

0: Counter name "*,10.1.1.1,proto=17,port=53": 1 reference

Policer directory: 1 entry (176 bytes)

0: Policer name "15K_*,10.1.1.1,proto=17,port=53": 1 reference

Bandwidth Limit: 1875 bytes/sec.

Burst Size: 15000 bytes.

discard

Program instructions: 9 words

0: match protocol != 17 -> 8:

set icmp-type

match source-port == 53 -> 5:

set destination-port

match destination-port != 53 -> 8:

5: match source-address != 0x0a010101 -> 8:

terminate -> action index 0

8: terminate -> action index 1
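The program instructions above can be read as a small decision tree: wrong protocol goes to the default action, then "port 53" is tested against the source port and, failing that, the destination port, and finally the source address is checked. Here is a rough Python sketch of that logic. The names are hypothetical and this only models the output shown, not the actual PFE implementation.

```python
import ipaddress

# Match criteria of the flow route "*,10.1.1.1,proto=17,port=53"
FLOW = {
    "source": ipaddress.ip_network("10.1.1.1/32"),
    "protocol": 17,  # UDP
    "port": 53,      # matches either the source or the destination port
}


def matches_flow(src_ip, protocol, src_port, dst_port, flow=FLOW):
    """Return True when a packet matches the flow route (illustrative model)."""
    if protocol != flow["protocol"]:
        return False
    # "port" is satisfied by the source port OR the destination port
    if flow["port"] not in (src_port, dst_port):
        return False
    return ipaddress.ip_address(src_ip) in flow["source"]


def action_for(src_ip, protocol, src_port, dst_port):
    """Map a packet to one of the two entries of the action directory."""
    if matches_flow(src_ip, protocol, src_port, dst_port):
        return "accept counter 0 policer 0"  # action index 0
    return "accept"                          # action index 1 (default)


print(action_for("10.1.1.1", 17, 53, 40000))
print(action_for("10.1.1.2", 17, 53, 40000))
```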

As presented in chapter 1, the second useful Action type is “redirect”. A redirect action is also encoded in a specific extended community. In Junos you can then use this community to redirect specific traffic into a VRF. This is very useful for traffic mitigation or traffic inspection (IDP).

Example of a flow route with the redirect action:

sponge@bob> show route 10.1.1.0/30 detail

inetflow.0: 1 destinations, 1 routes (1 active, 0 holddown, 0 hidden)

10.1.1.0,*,proto=17/term:1 (1 entry, 1 announced)

*BGP Preference: 170/-101

Next hop type: Fictitious

Address: 0x9041484

Next-hop reference count: 1

State: <Active Int Ext>

Local AS: 65000 Peer AS: 65000

Age: 48

Validation State: unverified

Task: BGP_65000.81.253.192.94+31919

Announcement bits (1): 0-Flow

AS path: ?

AS path: Recorded

Communities: redirect:65000:123456

Accepted

Localpref: 100

Router ID: 81.253.192.94

The only limitation on Junos when you redirect traffic is that the server in charge of mitigation, inspection or anything else must not be directly connected to the same router that is attached to the VRF. Indeed, Junos pushes FlowSpec routes towards all PFEs. So if you dynamically redirect traffic to a server to perform “traffic processing”, the returning traffic will be redirected again, and so on. In this configuration you will experience a forwarding loop.

The diagram below shows this limitation and 2 workarounds (design based).

On Junos, traffic redirection is configured as follows:

[edit protocols bgp]

group FLOWSPEC {

type internal;

local-address 10.1.1.3;

family inet {

unicast;

flow {

no-validate NO-VAIDATION;

}

}

neighbor 10.1.1.2 {

description FS-SERVER;

}

}

[edit policy-options]

policy-statement NO-VAIDATION {

term 1 {

from community redirect;

to instance PROCESSING-VRF;

then accept;

}

term 2 {

then accept;

}

}

community redirect members redirect:65000:123456;

[edit routing-instances]

PROCESSING-VRF {

instance-type vrf;

interface ge-2/2/1.0;

route-distinguisher 12.2.2.2:1234;

vrf-target target:65000:123456;

routing-options {

static {

defaults {

resolve;

}

# DEFAULT route if Server in charge of processing traffic is directly attached

# to the VRF

route 0.0.0.0/0 next-hop 10.128.2.10;

}

}

}

David.

Last week, I worked on 2 small projects on Junos 11.4. I had to:

- Find a way to automatically disable a physical link when LACP "flapped" too many times during a given time.

- Find a way to automatically disable a physical link when too many CRC errors are observed during a given time.

1/ LACP DAMPENING:

Currently vendors do not provide an LACP dampening mechanism. We are currently writing an RFE (Request For Enhancement) to get this feature implemented.

But I couldn't wait at least one year to have some kind of LACP dampening feature on my Junos. So, I tried to implement it by using an event-policy.

Event policies are handled by the eventd process. Eventd can trigger actions (operational and configuration commands) on reception of events: events are the "syslog" messages generated by all other processes. Eventd can also call specific scripts named event-scripts to perform multiple actions (show commands, committing changes, syslog generation...). This picture shows the concept:

So, to build my feature I used this event policy:

event-options {

policy LACP-DAMP {

events KERNEL;

within 120 {

trigger after 6;

}

attributes-match {

KERNEL.message matches KERN_LACP_INTF_STATE_CHANGE;

}

then {

event-script lacp-damp.slax;

}

}

event-script {

file lacp-damp.slax;

}

}

The event policy works like this: if eventd "sees" more than 6 LACP state-change events within 120 seconds (the values configured above), it calls the script lacp-damp.slax.
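The counting logic behind "within 120 / trigger after 6" can be sketched as a sliding time window. The Python below is only a model of that behaviour, not how eventd is implemented. It assumes that "after 6" means "more than 6 matching events inside the window", and the class name is hypothetical.

```python
from collections import deque


class EventWindow:
    """Sliding-window counter modelling 'within N { trigger after M; }'."""

    def __init__(self, within_seconds=120, trigger_after=6):
        self.within = within_seconds
        self.trigger_after = trigger_after
        self.timestamps = deque()

    def observe(self, now):
        """Record one matching event; return True when the policy would fire."""
        self.timestamps.append(now)
        # Forget events that are older than the time window
        while self.timestamps and now - self.timestamps[0] > self.within:
            self.timestamps.popleft()
        # Assumption: "after 6" fires once the count exceeds 6
        return len(self.timestamps) > self.trigger_after


window = EventWindow()
# One LACP state change every 10 seconds: the 7th event fires the policy
fired = [window.observe(t) for t in range(0, 70, 10)]
print(fired)
```

With one event every 30 seconds the window never holds more than 5 events, so the script is never called.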

Note: the script lacp-damp.slax must be pushed to both REs, in the folder /var/db/scripts/event, before committing the above event-policy.

The lacp-damp.slax code is available here. It is detailed below:

/* -------------------------------------------------*/

/* Slax written by door7302@gmail.com Version 1.0 */

/* -------------------------------------------------*/

version 1.0;

ns junos = "http://xml.juniper.net/junos/*/junos";

ns xnm = "http://xml.juniper.net/xnm/1.1/xnm";

ns jcs = "http://xml.juniper.net/junos/commit-scripts/1.0";

import "../import/junos.xsl";

match / {

var $regex1="is 0 ";

var $regex2="DETACHED";

var $sep1 = "( )";

/* Retrieve the entire syslog */

var $message = event-script-input/trigger-event/attribute-list/attribute[name=="message"]/value;

/* Find1 is a < junos 12.3 syslog and Find2 12.3 or > syslog */

var $find1 = jcs:regex($regex1,$message);

var $find2 = jcs:regex($regex2,$message);

if ($find1 !="" || $find2 !="" )

{

var $mytab1 = jcs:split ($sep1,$message);

if ($find1 !="") {

var $iif = $mytab1[8];

/* Now create the config environment */

var $configuration-rpc = {

<get-configuration database="committed" inherit="inherit">;

<configuration> {

<interfaces>;

}

}

/* Open the connection to enter the config */

var $connection = jcs:open();

var $configuration = jcs:execute( $connection, $configuration-rpc );

/* Define what you want to change : here disable the $iif interface */

var $change = {

<configuration> {

<interfaces> {

<interface> {

<name> $iif;

<disable>;

}

}

}

}

/* commit the config and retrieve the result of the commit */

var $results := { call jcs:load-configuration( $connection, $configuration=$change ); }

/* If commit failed, generate a specific syslog in messages */

if( $results//xnm:error ) {

for-each( $results//xnm:error ) {

expr jcs:syslog(25, "NEW event-script LACP-DAMP Error, failed to commit..." );

}

}

/* if commit passed, generate a human readable syslog in messages */

else {

var $new_message = "Event-script LACP-DAMP: LACP on " _ $iif _ " flapped too many times. Disable it by config";

expr jcs:syslog(25, $new_message);

}

/* close the connection. End of the script */

expr jcs:close( $connection );

}

if ($find2 !="") {

var $iif = $mytab1[4];

/* Now create the config environment */

var $configuration-rpc = {

<get-configuration database="committed" inherit="inherit">;

<configuration> {

<interfaces>;

}

}

/* Open the connection to enter the config */

var $connection = jcs:open();

var $configuration = jcs:execute( $connection, $configuration-rpc );

/* Define what you want to change : here disable the $iif interface */

var $change = {

<configuration> {

<interfaces> {

<interface> {

<name> $iif;

<disable>;

}

}

}

}

/* commit the config and retrieve the result of the commit */

var $results := { call jcs:load-configuration( $connection, $configuration=$change ); }

/* If commit failed, generate a specific syslog in messages */

if( $results//xnm:error ) {

for-each( $results//xnm:error ) {

expr jcs:syslog(25, "NEW event-script LACP-DAMP Error, failed to commit..." );

}

}

/* if commit passed, generate a human readable syslog in messages */

else {

/* $lag is never defined in this script, so only the member interface is logged */

var $new_message = "Event-script LACP-DAMP: LACP on " _ $iif _ " flapped too many times. Disable it by config";

expr jcs:syslog(25, $new_message);

}

/* close the connection. End of the script */

expr jcs:close( $connection );

}

}

}

When the commit succeeds, this kind of syslog message is generated:

Apr 15 15:35:13 MXbob cscript: Event-script LACP-DAMP: LACP on xe-0/0/0 flapped too many times. Disable it by config

2/ Auto-disable when CRC errors

Framing errors (a.k.a. CRC errors) degrade the quality of service, and we usually want to remove traffic from links that experience this kind of error. OAM LFM provides a way to automatically trigger a Link_DOWN event when a given amount of framing or symbol errors is detected on a link. But currently on Junos we can trigger an action only when a link experiences a framing error rate of 10^-1. This is often too late. What I want is to automatically shut down a link when it reaches a framing error rate of 10^-5 or 10^-6.

To build this feature I used 2 Junos features: event-policy and an RMON probe. RMON is a Remote Monitoring feature described in RFC 2021, 2613 and 2819. It is actually an internal SNMP agent which can poll internal MIB objects (SNMP get) or tables (SNMP walk) and trigger a syslog and/or a trap when a given MIB value crosses a configurable threshold between 2 polls.

The probe raises an event when a "rising threshold" is reached and can generate a second event when a "falling threshold" is reached. The following graph explains the concept:

The RMON probe is configured like this. I use the walk request type to automatically create an instance for each object of the table ifJnxInFrameErrors (CRC errors for each physical interface).

snmp {

rmon {

alarm 1 {

description "CRC monitoring";

interval 30;

variable ifJnxInFrameErrors;

sample-type delta-value;

request-type walk-request;

rising-threshold 360;

falling-threshold 0;

rising-event-index 1;

falling-event-index 2;

syslog-subtag CRC_WARN;

}

event 1 {

description "Generate Event - Too many CRC";

type log;

}

event 2 {

description "Generate Event - No more CRC";

type log;

}

}

}

Every 30s the SNMP agent polls the frame error MIB and computes, for each ifIndex, the delta of framing errors over the last 30 seconds. If this value is higher than 360 (the rising threshold), it triggers a specific syslog:

Apr 15 16:52:29 MXbob snmpd[62774]: SNMPD_RMON_EVENTLOG: CRC_WARN: Event 1 triggered by Alarm 1, rising threshold (360) crossed, (variable: ifJnxInFrameErrors.820, value: 7395)

Then, if the delta of framing errors for a given ifIndex falls to zero, the second syslog is generated:

Apr 15 16:53:44 MXbob snmpd[62774]: SNMPD_RMON_EVENTLOG: CRC_WARN: Event 2 triggered by Alarm 1, falling threshold (0) crossed, (variable: ifJnxInFrameErrors.820, value: 0)
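The rising/falling behaviour of the alarm can be modelled in a few lines of Python. This is a simplified sketch of the RMON hysteresis (it ignores the startup alarm handling of the RMON MIB), and the function name is hypothetical: a rising event is logged once, and no new rising event is logged until a falling event has occurred.

```python
def rmon_events(counter_samples, rising=360, falling=0):
    """Return the (event, delta) list produced by successive counter polls.

    counter_samples holds the raw counter value read at each polling
    interval; the alarm works on the delta between two consecutive polls
    (sample-type delta-value).
    """
    events = []
    armed_for_rising = True
    previous = counter_samples[0]
    for current in counter_samples[1:]:
        delta = current - previous
        previous = current
        if armed_for_rising and delta >= rising:
            events.append(("rising", delta))
            armed_for_rising = False   # wait for a falling event first
        elif not armed_for_rising and delta <= falling:
            events.append(("falling", delta))
            armed_for_rising = True
    return events


# Five polls after the initial read: deltas are 10, 7395, 1595, 0 and 5
samples = [0, 10, 7405, 9000, 9000, 9005]
print(rmon_events(samples))
```

Note that the third poll (delta 1595) is still above the threshold but does not log a second rising event.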

Here I set the rising threshold to 360 frames. I computed this value as follows: for a 10GE interface with a half-loaded link (5 Gbit/s) and an average packet size of 512 bytes, I should receive around 36M packets in 30 seconds (the configured polling interval). If I want to shut down the link when it experiences a ratio of 10^-5 corrupted frames, the number of CRC errors in 30 seconds should be around 360 (36M x 10^-5). For a ratio of 10^-6 the same computation gives a threshold of around 36.
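The threshold arithmetic can be checked with a short Python sketch. The function names are hypothetical; the inputs are the figures used above (5 Gbit/s of traffic, 512-byte average packets, 30-second polling interval).

```python
def packets_in_interval(traffic_bps, avg_packet_bytes, interval_s):
    """Number of packets received during one polling interval."""
    packets_per_second = traffic_bps / (avg_packet_bytes * 8)
    return packets_per_second * interval_s


def expected_errors(traffic_bps, avg_packet_bytes, interval_s, error_ratio):
    """Expected number of corrupted frames for a given frame error ratio."""
    return packets_in_interval(traffic_bps, avg_packet_bytes, interval_s) * error_ratio


packets = packets_in_interval(5e9, 512, 30)
print(f"packets per 30 s      : {packets:,.0f}")
print(f"errors at ratio 1e-5  : {expected_errors(5e9, 512, 30, 1e-5):.0f}")
print(f"errors at ratio 1e-6  : {expected_errors(5e9, 512, 30, 1e-6):.0f}")
```

The result depends heavily on the assumed load and packet size, so the threshold should be recomputed for links with a different traffic profile.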

Now that my events (syslogs) are generated, I can again use an event-policy which tracks the "rising event" and then calls a dedicated event script that performs the actions.

The event policy is the following:

event-options {

policy RMON-CRC {

events snmpd_rmon_eventlog;

then {

event-script rmon-crc.slax;

}

}

event-script {

file rmon-crc.slax;

}

}

Again, the script rmon-crc.slax must be pushed to both REs, in the folder /var/db/scripts/event, before committing the above event-policy.

The rmon-crc.slax script is available here. It is detailed below:

/* ------------------------------------------------*/

/* Slax written by door7302@gmail.com Version 1.0 */

/* ------------------------------------------------*/

version 1.0;

ns junos = "http://xml.juniper.net/junos/*/junos";

ns xnm = "http://xml.juniper.net/xnm/1.1/xnm";

ns jcs = "http://xml.juniper.net/junos/commit-scripts/1.0";

import "../import/junos.xsl";

match / {

/* Init some variables used to parse the syslog message */

var $regex1="rising";

var $regex2="falling";

var $sep1 = "(,)";

var $sep2 = "\\.";

/* Retrieve the entire syslog */

var $message = event-script-input/trigger-event/attribute-list/attribute[name=="message"]/value;

/* Find1 is a rising syslog and Find2 the falling syslog */

var $find1 = jcs:regex($regex1,$message);

var $find2 = jcs:regex($regex2,$message);

/* IF I received a RMON rising syslog */

if ($find1 !="") {

/* Retrieve the ifindex value from the syslog */

var $mytab1 = jcs:split ($sep1,$message);

var $mytab2 = jcs:split ($sep2,$mytab1[3]);

var $ifindex = $mytab2[2];

/* Seek the interface name based on the ifindex */

var $myrpc1 = <get-interface-information> {

<snmp-index> $ifindex;

}

var $iif-info = jcs:invoke ($myrpc1);

var $iif = $iif-info/physical-interface/name;

/* Init the config. environment */

var $configuration-rpc = {

<get-configuration database="committed" inherit="inherit">;

<configuration> {

<interfaces>;

}

}

/* Open the config. connection */

var $connection = jcs:open();

var $configuration = jcs:execute( $connection, $configuration-rpc );

/* create the config change template */

var $change = {

<configuration> {

<interfaces> {

<interface> {

<name> $iif;

<disable>;

}

}

}

}

/* Try to commit the change and retrieve the result */

var $results := { call jcs:load-configuration( $connection, $configuration=$change ); }

/* If there is an error: generate a specific syslog */

if( $results//xnm:error ) {

for-each( $results//xnm:error ) {

expr jcs:syslog(25, "NEW event-script RMON-CRC: Error, failed to commit..." );

}

}

/* If commit passed, generate a human readable syslog */

else {

var $new_message = "Event-script RMON-CRC: Too many CRC errors on " _ $iif _ " Disable it by config";

expr jcs:syslog(25, $new_message);

}

/* close the connection */

expr jcs:close( $connection );

}

/* If RMON falling syslog is received */

/* Just generate a human readable syslog */

if ($find2 !="") {

var $mytab1 = jcs:split ($sep1,$message);

var $mytab2 = jcs:split ($sep2,$mytab1[3]);

var $ifindex = $mytab2[2];

var $myrpc1 = <get-interface-information> {

<snmp-index> $ifindex;

}

var $iif-info = jcs:invoke ($myrpc1);

var $iif = $iif-info/physical-interface/name;

var $new_message = "Event-script RMON-CRC: No more CRC error on " _ $iif;

expr jcs:syslog(25, $new_message);

}

}

When the commit succeeds, this kind of syslog message is generated:

Apr 16 10:02:24 MXbob cscript: Event-script RMON-CRC: Too many CRC errors on xe-1/3/3 Disable it by config
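For readers less familiar with SLAX, the parsing step of rmon-crc.slax (finding out whether the syslog is a rising or falling one, and extracting the ifIndex) can be sketched in Python. It uses a regular expression instead of the successive splits of the script, and the names are hypothetical. The sample lines are the two RMON syslogs shown earlier.

```python
import re

# Pattern written against the SNMPD_RMON_EVENTLOG messages shown in this post
RMON_RE = re.compile(
    r"(?P<direction>rising|falling) threshold \((?P<threshold>\d+)\) crossed, "
    r"\(variable: (?P<variable>\w+)\.(?P<ifindex>\d+), value: (?P<value>\d+)\)"
)


def parse_rmon_syslog(line):
    """Return the useful fields of an RMON syslog, or None if it does not match."""
    found = RMON_RE.search(line)
    if found is None:
        return None
    return {
        "direction": found.group("direction"),
        "threshold": int(found.group("threshold")),
        "variable": found.group("variable"),
        "ifindex": int(found.group("ifindex")),
        "value": int(found.group("value")),
    }


rising_line = (
    "Apr 15 16:52:29 MXbob snmpd[62774]: SNMPD_RMON_EVENTLOG: CRC_WARN: "
    "Event 1 triggered by Alarm 1, rising threshold (360) crossed, "
    "(variable: ifJnxInFrameErrors.820, value: 7395)"
)
falling_line = (
    "Apr 15 16:53:44 MXbob snmpd[62774]: SNMPD_RMON_EVENTLOG: CRC_WARN: "
    "Event 2 triggered by Alarm 1, falling threshold (0) crossed, "
    "(variable: ifJnxInFrameErrors.820, value: 0)"
)
print(parse_rmon_syslog(rising_line))
print(parse_rmon_syslog(falling_line))
```

In the event script, the extracted ifIndex is then resolved to an interface name through the get-interface-information RPC.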

David.

IS-IS Screams,

BGP peer flapping:

I want my mommy!